Analysis of Gradient Clipping and Adaptive Scaling with a Relaxed Smoothness Condition | Semantic Scholar

GitHub - JingzhaoZhang/why-clipping-accelerates: A pytorch implementation for the LSTM experiments in the paper: Why Gradient Clipping Accelerates Training: A Theoretical Justification for Adaptivity

machine learning - Gradient clipping in pytorch has no effect (Gradient exploding still happens) - Stack Overflow

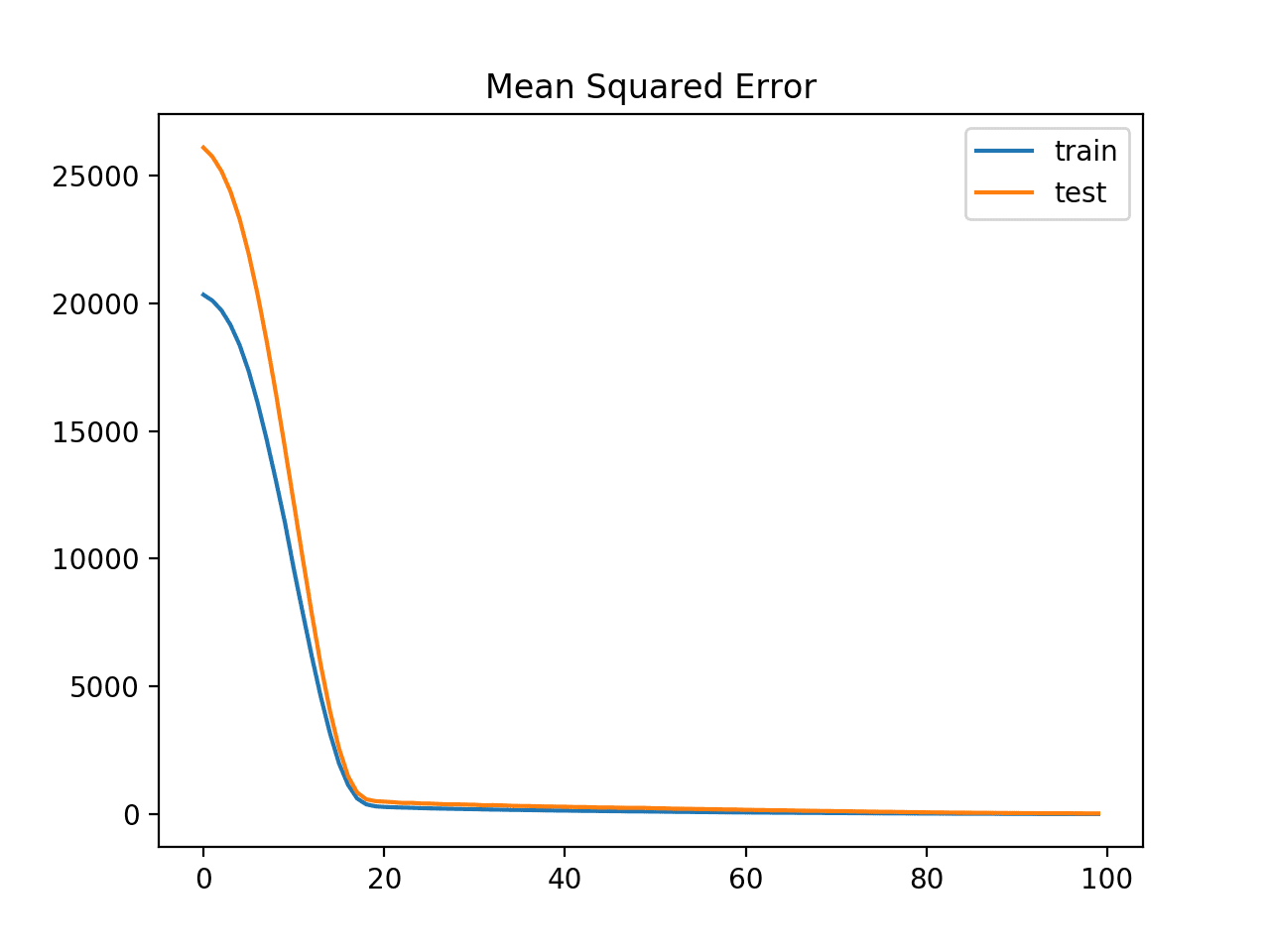

![PyTorch: Gradient clipping](https://blog.kakaocdn.net/dn/buSTOa/btqM4ywK0Gf/JqcKIyiKTKVWHCCWd59u00/img.png)

![PyTorch: Gradient clipping](https://blog.kakaocdn.net/dn/bhDouC/btqJnEUH6N4/cHCdmBGndw51Wu8LV9dFt1/img.png)

![PyTorch: Gradient clipping](https://blog.kakaocdn.net/dn/bvy1vR/btqJs54y1NR/PY3hIolAlOLZxCwI210oN0/img.png)